Update: I now have two lights, to give a more even source of light I use this one, which is really cheap, from .uk - and no doubt there is something similar on. You need an even source of light placed over the board. The algorithms work best if the chessboard has a colour that is a long way from the colour of the pieces! In my robot, the pieces are off-white and brown, and the chess board is hand-made in card, and is a light green with little difference between the "black" and "white" squares.Įdit : I have now painted the brown pieces matt black, which makes the algorithm work under more variable lighting conditions. As stated previously, this is all we need to do in order to determine what the human player's move was. The minimum of these means for any white square is greater than the maximum of the means across any black square, and so we can determine the piece colour for occupied squares. On the initial board we calculate for each white square, for each of R, G, B, the mean (average) value of its pixels (other than those near the borders of the square). Having determined the threshold value for empty versus occupied squares, we now need to determine the piece colour for occupied squares: The maximum standard deviation for any empty square is much less than the minimum standard deviation for any occupied square, and this allows us, after a subsequent player move, to determine which squares are empty. We compute the standard deviation for each of the three RGB colours for each square across all its pixels (other than those near the borders of the square). On the initial board set-up we know where all the white and black pieces are and where the empty squares are.Įmpty squares have much less variation in colour than have occupied squares. We can now consider the image in terms of chessboard squares. The player has then to tell the robot what the promoted piece is. The robot checks that the human's move is correct, and informs them if it isn't! 
The only case not covered is where the human player promotes a pawn into a non-queen. This covers all cases, including castling and en passant.

Lego chess pc code#

Because the robot knows where all the pieces are after the computer move, then all that has to be done after the human makes a move is for the code to be able to tell the difference between the following three cases: However, the camera is far enough away so that, after cropping, this distortion is not significant. There is distortion in the image because the edges of the board are further away from the camera than the centre of the board is. The chessboard squares need to look square!. The code crops and rotates this so that the chessboard exactly fits the subsequent image.

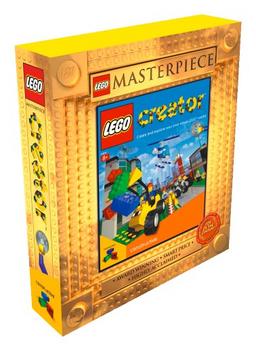

You can also use Rebrickable: open an account, upload the LDD file and from that get a list of sellers.Īfter the player has made their move, the camera takes a photo. However, they will not have everything you need, and the bricks may take a couple of weeks or more to arrive. You can get bricks direct from the LEGO shop, and this is the cheapest way to obtain them. When you have the Lego Digital Designer file, then there is the question of getting the LEGO pieces. The BrickPi attaches to the Raspberry Pi and together they replace the LEGO Mindstorms NXT or EV3 Brick. In order to interface the RPi to the Lego, I use BrickPi3 from Dexter Industries. The original build uses the Lego Mindstorms EV3 processor, but I use a Raspberry Pi, which makes it easy to use Python.Ĥ.

And then when the crane was lowered it would often jam, so I added a Watt's linkage to prevent that.Ībove is the grabber in action, showing the modified linkage.Ģ. The gears slipped, so I added additional Lego pieces to prevent that.

I modified the robot in a couple of ways.ġ.

Lego chess pc how to#

I based my robot on " Charlie the Chess Robot" (EV3 version) by Darrous Hadi, info on that page says how to get the build instructions. As I previously indicated, the heart of the vision code will work with a variety of builds.
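The empty-versus-occupied test described in this post can be sketched in plain Python. This is a minimal illustration, not the robot's actual code: the function names, the 20% border margin, the choice to sum the three per-channel standard deviations into one number, and the midpoint threshold are all assumptions. The image is taken to be a list of rows of `(R, G, B)` tuples that has already been cropped to the board.

```python
from statistics import pstdev


def square_pixels(image, row, col, margin=0.2):
    """Return the pixels of board square (row, col), skipping its borders.

    `margin` (an assumed value) is the fraction trimmed from each side.
    """
    height, width = len(image), len(image[0])
    top, bottom = row * height // 8, (row + 1) * height // 8
    left, right = col * width // 8, (col + 1) * width // 8
    trim_y = int((bottom - top) * margin)
    trim_x = int((right - left) * margin)
    return [image[y][x]
            for y in range(top + trim_y, bottom - trim_y)
            for x in range(left + trim_x, right - trim_x)]


def spread(pixels):
    """Sum of the R, G and B standard deviations (assumed way to combine them)."""
    return sum(pstdev([p[c] for p in pixels]) for c in range(3))


def calibrate_threshold(spreads, occupied):
    """Pick a threshold from the initial board, where occupancy is known.

    `spreads` maps (row, col) to spread(); `occupied` is the set of
    squares known to hold a piece.
    """
    max_empty = max(s for sq, s in spreads.items() if sq not in occupied)
    min_occupied = min(s for sq, s in spreads.items() if sq in occupied)
    if max_empty >= min_occupied:
        raise ValueError("empty and occupied squares overlap; check the lighting")
    return (max_empty + min_occupied) / 2  # midpoint is an assumption


def is_occupied(pixels, threshold):
    """True if the square varies in colour more than an empty square would."""
    return spread(pixels) > threshold
```

Calibration would run once on the starting position; after each human move, `is_occupied` would be applied to all 64 squares of the new photo.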
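The piece-colour step described in this post compares per-channel means against values measured on the initial board. A minimal sketch follows, assuming each square's pixels are already available as a list of `(R, G, B)` tuples with the borders trimmed. The function names, the midpoint thresholds and the two-out-of-three channel vote are illustrative assumptions rather than the robot's actual code.

```python
from statistics import mean


def channel_means(pixels):
    """Mean R, G and B values over a square's pixels."""
    return tuple(mean([p[c] for p in pixels]) for c in range(3))


def calibrate_colour_thresholds(white_means, black_means):
    """Per-channel thresholds from the initial board.

    `white_means` and `black_means` are lists of channel_means() results
    for squares known to hold white and black pieces respectively.
    """
    thresholds = []
    for c in range(3):
        lowest_white = min(m[c] for m in white_means)
        highest_black = max(m[c] for m in black_means)
        if lowest_white <= highest_black:
            raise ValueError("white and black pieces overlap on channel %d" % c)
        thresholds.append((lowest_white + highest_black) / 2)  # assumed midpoint
    return tuple(thresholds)


def piece_colour(pixels, thresholds):
    """Classify an occupied square as 'white' or 'black' by majority vote."""
    means = channel_means(pixels)
    votes = sum(m > t for m, t in zip(means, thresholds))
    return "white" if votes >= 2 else "black"
```

The `ValueError` is the useful part in practice: if the lightest black piece is not clearly darker than the darkest white piece at calibration time, the lighting or piece colours need attention before play starts.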
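To see why knowing only "empty, white or black" for each square is enough to recover a move, including castling and en passant, here is a small sketch that compares the board before and after the human's turn. The dictionary board representation and the names `start_position` and `describe_move` are hypothetical. The sketch does not check legality, which the robot described here does separately, and it cannot tell which piece a pawn was promoted to.

```python
FILES = "abcdefgh"


def start_position():
    """Colour map of the initial set-up: 'w', 'b' or None for each square."""
    board = {f + str(r): None for f in FILES for r in range(1, 9)}
    for f in FILES:
        board[f + "1"] = board[f + "2"] = "w"
        board[f + "7"] = board[f + "8"] = "b"
    return board


def describe_move(before, after, mover):
    """Classify the change between two colour maps for the side `mover`.

    Returns (kind, vacated_squares, filled_squares).
    """
    vacated = sorted(sq for sq in before
                     if before[sq] is not None and after[sq] is None)
    filled = sorted(sq for sq in before
                    if after[sq] == mover and before[sq] != mover)
    changed = {sq for sq in before if before[sq] != after[sq]}
    if changed - set(vacated) - set(filled):
        return ("unrecognised", vacated, filled)
    if len(vacated) == 1 and len(filled) == 1:
        # The destination held an enemy piece before: a capture.
        kind = "capture" if before[filled[0]] is not None else "move"
    elif len(vacated) == 2 and len(filled) == 2:
        kind = "castling"      # king and rook both change squares
    elif len(vacated) == 2 and len(filled) == 1:
        kind = "en passant"    # mover's pawn and the captured pawn both vanish
    else:
        kind = "unrecognised"
    return (kind, vacated, filled)
```

For example, after 1. e4 the only differences from the starting map are that `e2` is now empty and `e4` is white, so `describe_move` reports an ordinary move from `e2` to `e4`.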